Prerequisites To Writing Express APIs

Prerequisites To Writing Express APIs

This article will cover the basics of express from the perspective of a beginner without concerning it’s self with the underlying mechanisms and theory that underlies the application of the framework.

For starters, what is express JS¿

When introduced, node.js gave developers the chance to use JavaScript to write software that, up to that point, could only be written using lower level languages like C, C++, Java, Python…

In this tutorial we will cover how to write web services that can communicate with clients (the front end application) using JavaScript Object Notation (JSON).

- JavaScript is asynchronous, which allows us to take full advantage of the processor it’s running on. Taking full advantage of the processor is crucial because the node process will be running on a single CPU.

- Using JavaScript gives us access to the npm repository. This repository is the largest ecosystem of useful libraries (most of them free to use) in npm modules.

Objective 1 — explain what Node.js is and its core features

Overview

Traditionally, developers only used the JavaScript language in web browsers. But, in 2009, Node.js was unveiled, and with it, the developer tool kit expanded greatly. Node.js gave developers the chance to use JavaScript to write software that, up to that point, could only be written using C, C++, Java, Python, Ruby, C#, and the like.

We will use Node to write server code. Specifically, web services that can communicate with clients using the JavaScript Object Notation (JSON) format for data interchange.

Some of the advantages of using Node.js for writing server-side code are:

- Uses the same programming language (JavaScript) and paradigm for both client and server. Using the same language, we minimize context switching and make it easy to share code between the client and the server.

- JavaScript is single-threaded, which removes the complexity involved in handling multiple threads.

- JavaScript is asynchronous, which allows us to take full advantage of the processor it’s running on. Taking full advantage of the processor is crucial because the node process will be running on a single CPU.

- Using JavaScript gives us access to the npm repository. This repository is the largest ecosystem of useful libraries (most of them free to use) in npm modules.

Some of the disadvantages of using Node.js for writing server-side code are:

- By strictly using JavaScript on the server, we lose the ability to use the right tool (a particular language) for the job.

- Because JavaScript is single-threaded, we can’t take advantage of servers with multiple cores/processors.

- Because JavaScript is asynchronous, it is harder to learn for developers that have only worked with languages that default to synchronous operations that block the execution thread.

- In the npm repository, there are often too many packages that do the same thing. This excess of packages makes it harder to choose one and, in some cases, may introduce vulnerabilities into our code.

To write a simple web server with

Node.js:

-

Use Node’s

HTTPmodule to abstract away complex network-related operations. - Write the single request handler function to handle all requests to the server.

The request handler is a function that takes the

request coming

from the client and produces the

response. The

function takes two arguments: 1) an object representing the

request and 2)

an object representing the

response.

This process works, but the resulting code is verbose, even for the simplest of servers. Also, note that when using only Node.js to build a server, we use a single request handler function for all requests.

Follow Along

Using only Node.js, let’s write a simple web server that returns

a message. Create a folder for the server and add an

index.js file

inside.

Next, add the following code to the

index.js file:

const http = require("http"); // built in node.js module to handle http trafficconst hostname = "127.0.0.1"; // the local computer where the server is running

const port = 3000; // a port we'll use to watch for trafficconst server = http.createServer((req, res) => {

// creates our server

res.statusCode = 200; // http status code returned to the client

res.setHeader("Content-Type", "text/plain"); // inform the client that we'll be returning text

res.end("Hello World from Node\\n"); // end the request and send a response with the specified message

});server.listen(port, hostname, () => {

// start watching for connections on the port specified

console.log(`Server running at <http://$>{hostname}:${port}/`);

});

Now navigate to the folder in a terminal/console window and

type:

node index.js

to execute your file. A message that reads "Server running at http://127.0.0.1:3000" should be displayed, and the server is now waiting for

connections.

Open a browser and visit:

http://localhost:3000.

localhost and

the IP address

127.0.0.1 point

to the same thing: your local computer. The browser should show

the message: "Hello World from Node". There you have it, your first web server, built from

scratch using nothing but

Node.js.

Challenge

Write a paragraph about what

Node.js is and

explain at least 3 of its core features.

Objective 2 — explain what Express is and its core features

Overview

Node’s built-in

HTTP module

provides a powerful way to build web applications and services.

However, it requires a lot of code for everyday tasks like

sending an HTML page to the browser.

Introducing Express, a light and unopinionated framework that

sits on top of Node.js, making it easier to create web applications and services.

Sending an HTML file or image is now a one-line task with the

sendFile helper

method in

Express.

Ultimately, Express is just a Node.js module like any other module.

What can we do with Express? So many things! For example:

- Build web applications.

- Serve Single Page Applications (SPAs).

- Build RESTful web services that work with JSON.

- Serve static content, like HTML files, images, audio files, PDFs, and more.

- Power real-time applications using technologies like Web Sockets or WebRTC.

Some of the benefits of using Express are that it is:

- Simple

- Unopinionated

- Extensible

- Light-weight

-

Compatible with

connect middleware (Links to an external site.). This compatibility means we can tap into an extensive

collection of modules written for

connect. - All packaged into a clean, intuitive, and easy-to-use API.

- Abstracts away common tasks (writing web applications can be verbose, hence the need for a library like this).

Some of the drawbacks of Express are:

- It’s not a one-stop solution. Because of its simplicity, it does very little out of the box. Especially when compared to frameworks like Ruby on Rails and Django.

- We are forced to make more decisions due to the flexibility and control it provides.

Main Features of Express

Middleware functions are functions that have access to the request object ( req), the response object ( res), and the…expressjs.com

Middleware

Middleware functions can get the request and response objects, operate on them, and (when specified) trigger some action. Examples are logging or security.

Express’s middleware stack is an array of functions.

Middleware can change the request or response, but it doesn’t have to.

Routing

Routing is a way to select which request handler function is executed. It does so based on the URL visited and the HTTP method used. Routing provides a way to break an application into smaller parts.

Routers for Application Modularity

We can break up applications into routers. We could have a router to serve our SPA and another router for our API. Each router can have its own middleware and routing. This combination provides improved functionality.

Convenience Helpers

Express has many helpers that provide out of the box functionality to make writing web applications and API servers easier.

A lot of those helpers are extension methods added to the request and response objects.

Examples

from the Api Reference (Links to an external site.)

include:

response.redirect(),

response.status(),

response.send(), and

request.ip.

Views

Views provide a way to dynamically render HTML on the server and even generate it using other languages.

Follow Along

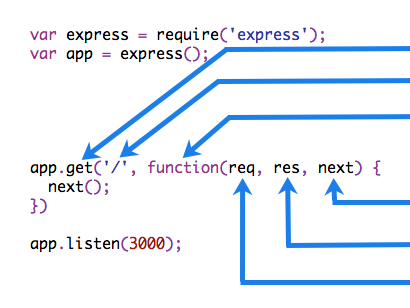

Let’s write our first server using Express:

-

Create a new file called

server.jsto host our server code. -

Type

npm init -yto generate apackage.json. -

Install the

expressnpm module using:npm install express.

Inside

server.js add

the following code:

const express = require('express'); // import the express packageconst server = express(); // creates the server// handle requests to the root of the api, the / route

server.get('/', (req, res) => {

res.send('Hello from Express');

});// watch for connections on port 5000

server.listen(5000, () =>

console.log('Server running on <http://localhost:5000>')

);

Run the server by typing:

node server.js

and visit

http://localhost:5000

in the browser.

To stop the server, type

Ctrl + c at the

terminal window.

Challenge

On your own: Write a paragraph about what Express is and explain how it is used in modern web application development.

Objective 3 — create an API that can respond to GET requests

Overview

In this overview, we’re walking through the steps necessary to

build a simple Web API that returns the string “Hello World” on

every incoming

GET request.

The program should return the string every time a request comes

into the root route ("/"). For now, you don't need

to code along, just read through the steps.

To make things easier, we’ll use an existing repository as the base for our API. Later in the week, as we learn more about Node.js and Express, we’ll create an API from scratch.

To build our first API, we will:

- clone the node-express-mini repository (Links to an external site.) to a folder on our computer.

-

Navigate into the folder using

cd. -

Use

npm installto download all dependencies. -

Add a file called

index.jsat the folder's root, next to thepackage.jsonfile. -

Open the

index.jsfile using our favorite code editor. - Add the following code:

// require the express npm module, needs to be added to the project using "npm install express"

const express = require('express');// creates an express application using the express module

const server = express();// configures our server to execute a function for every GET request to "/"

// the second argument passed to the .get() method is the "Route Handler Function"

// the route handler function will run on every GET request to "/"

server.get('/', (req, res) => {

// express will pass the request and response objects to this function

// the .send() on the response object can be used to send a response to the client

res.send('Hello World');

});// once the server is fully configured we can have it "listen" for connections on a particular "port"

// the callback function passed as the second argument will run once when the server starts

server.listen(8000, () => console.log('API running on port 8000'));

make sure to save your changes to index.js.

We are using the

express npm

module in our code, so we need to add it as a dependency to our

project. To do this:

- Open a terminal/console/command prompt window and navigate to the root of our project.

-

Add express to our

package.jsonfile by typingnpm install express.

Now we’re ready to test our API!

In the terminal, still at the root of our project:

-

Type:

npm run serverto run our API. The message "Api running on port 8000" should appear on the terminal. - Open a web browser and navigate to “http://localhost:8000".

There we have it, our first API!

A lot is going on in those few lines of code (only six lines if we remove the comments and white space). We will cover every piece of it in detail over the following modules, but here is a quick rundown of the most important concepts.

First, we used

require() to

import

the

express module

and make it available to our application.

require() is

similar to the

import keyword

we have used before. The line

const express = require('express');

is equivalent to

import express from 'express';

if we were using ES2015 syntax.

The following line creates our Express application. The return

of calling

express() is an

instance of an Express application that we can use to configure

our

server

and, eventually, start listening for and responding to requests.

Notice we use the word server, not API. An Express application

is generic, which means we can use it to serve static content

(HTML, CSS, audio, video, PDFs, and more). We can also use an

Express application to serve dynamically generated web pages,

build real-time communications servers, and more. We will use it

statically to accept requests from clients and respond with data

in JSON format.

An Express application publishes a set of methods we can use to

configure functions. We are using the .get()

method to set up a

route handler

function that will run on every

GET request. As

a part of this handler function, we specify the URL which will

trigger the request. In this case, the URL is the site's

root (represented by a

/). There are

also methods to handle the

POST,

PUT, and

DELETE HTTP

verbs.

The first two arguments passed by

express to a

route handler function are 1) an object representing the

request and 2)

an object representing the

response.

Express expands those objects with a set of useful properties

and methods. Our example uses the .send()

method of the response object to specify the data we will send

to the client as the response body. You can call the first two

arguments anything you want, but it is prevalent to see them

dubbed req and

res. We at

Lambda call them

the homies

as they always hang out together.

That’s all the configuring we need to do for this first example. We’ll see other ways of configuring our server as we go forward.

After configuring the server, it’s time to turn it on. We use

the .listen()

method to monitor a port on the computer for any incoming

connections and respond to those we have configured. Our server

will only respond to

GET requests

made to the

/ route on port

8000.

That’s it for our first Web API, and now it’s time for you to follow along as we add a new endpoint to our server that returns JSON data!

Follow Along

Let’s try returning JSON instead of just a simple string.

Please follow the steps outlined on the overview, but, to save

time, copy and paste the content of

index.js

instead of typing it. Then run your API through a browser to

make sure it works.

Now follow along as we code a new endpoint that returns an array of movie characters in JSON format.

The first step is to define a new

route handler to

respond to

GET requests at

the

/hobbits

endpoint.

server.get('/hobbits', (req, res) => {

// route handler code here

});Next, we define the return data that our endpoint will send back to the client. We do this inside the newly defined route handler function.

const hobbits = [

{

id: 1,

name: 'Samwise Gamgee',

},

{

id: 2,

name: 'Frodo Baggins',

},

];

Now we can return the

hobbits array.

We could use .send(hobbits)

as we did for the string on the

/ endpoint, but

this time we'll learn about two other useful methods we find

in the response object.

res.status(200).json(hobbits);

We should provide as much useful information as possible to the

clients using our API. One such piece of data is the

HTTP status code

that reflects the client's operation outcome. In this case,

the client is trying to get a list of a particular

resource, a

hobbits list.

Sending back a

200 OK status

code communicates to the client that the operation was

successful.

We will see other status codes as we continue to build new endpoints during this week. You can see a list by following this link to the documentation about HTTP Response Codes on the Mozilla Developer Network site (Links to an external site.).

We can use the .status()

method of the response object to send any valid

HTTP status code.

We are also chaining the .json()

method of the response object. We do this to communicate to both

the client making the request and the next developer working

with this code that we intend to send the data in

JSON format.

The complete code for

index.js should

now look like so:

const express = require('express');const server = express();server.get('/', (req, res) => {

res.send('Hello World');

});server.get('/hobbits', (req, res) => {

const hobbits = [

{

id: 1,

name: 'Samwise Gamgee',

},

{

id: 2,

name: 'Frodo Baggins',

},

]; res.status(200).json(hobbits);

});server.listen(8000, () => console.log('API running on port 8000'));

Now we can visit

http://localhost:8000/hobbits

in our browser, and we should get back our JSON array.

If you are using the Google Chrome browser, this extension (Links to an external site.) can format the JSON data in a more readable fashion.

Congratulations! You just built an API that can return data in JSON format.

Overview

Let’s look at a basic example of routing in action.

First, to make our Express application respond to

GET requests on

different URLs, add the following endpoints:

// this request handler executes when making a GET request to /about

server.get('/about', (req, res) => {

res.status(200).send('<h1>About Us</h1>');

});// this request handler executes when making a GET request to /contact

server.get('/contact', (req, res) => {

res.status(200).send('<h1>Contact Form</h1>');

});Two things to note:

- we are using the same HTTP Method on both endpoints, but express looks at the URL and executes the corresponding request handler.

- we can return a string with valid HTML!

Open a browser and navigate to the

/about and

/contact

routes. The appropriate route handler will execute.

Follow Along

Please follow along as we write endpoints that execute different request handlers on the same URL by changing the HTTP method.

Let’s start by adding the following code after the

GET endpoint to

/hobbits:

// this request handler executes when making a POST request to /hobbits

server.post('/hobbits', (req, res) => {

res.status(201).json({ url: '/hobbits', operation: 'POST' });

});

Note that we return HTTP status code 201 (created) for

successful

POST

operations.

Next, we need to add an endpoint for

PUT requests to

the same URL.

// this request handler executes when making a PUT request to /hobbits

server.put('/hobbits', (req, res) => {

res.status(200).json({ url: '/hobbits', operation: 'PUT' });

});

For successful

PUT operations,

we use HTTP Status Code 200 (OK).

Finally, let’s write an endpoint to handle

DELETE

requests.

// this request handler executes when making a DELETE request to /hobbits

server.delete('/hobbits', (req, res) => {

res.status(204);

});We are returning HTTP Status Code 204 (No Content). Suppose you are returning any data to the client, perhaps the removed resource, on successful deletes. In that case, you’d change that to be 200 instead.

You may have noticed that we are not reading any data from the

request, as that is something we’ll learn later in the module.

We are about to learn how to use a tool called

Postman to test

our POST,

PUT, and

DELETE

endpoints.

Overview

Reading and Using Route Parameters

Let’s revisit our

DELETE

endpoint.

server.delete('/hobbits', (req, res) => {

res.status(204);

});

How does the client let the API know which hobbit should be

deleted or updated? One way, the one we’ll use, is through

route parameters. Let's add support for route parameters to our

DELETE

endpoint.

We define route parameters by adding it to the URL with a colon

(:) in front of

it. Express adds it to the .params

property part of the request object. Let's see it in action:

server.delete('/hobbits/:id', (req, res) => {

const id = req.params.id;

// or we could destructure it like so: const { id } = req.params;

res.status(200).json({

url: `/hobbits/${id}`,

operation: `DELETE for hobbit with id ${id}`,

});

});

This route handler will execute every

DELETE for a

URL that begins with

/hobbits/

followed by any value. So,

DELETE requests

to

/hobbits/123

and

/hobbits/frodo

will both trigger this request handler. The value passed after

/hobbits/ will

end up as the

id property on

req.params.

The value for a route parameter will always be

string, even if

the value passed is numeric. When hitting

/hobbits/123 in

our example, the type of

req.params.id

will be string.

Express routing has support for multiple route parameters. For

example, defining a route URL that reads

/hobbits/:id/friends/:friendId, will add properties for

id and

friendId to

req.params.

Using the Query String

The query string is another strategy using the URL to pass

information from clients to the server. The query string is

structured as a set of key/value pairs. Each pair takes the form

of key=value,

and pairs are separated by an

&. To mark

the beginning of the query string, we add ?

and the end of the URL, followed by the set of key/value pairs.

An example of a query string would be:

https://www.google.com/search?q=lambda&tbo=1. The query string portion is ?q=lambda&tbo=1

and the key/value pairs are

q=lambda and

tbo=1.

Let’s add sorting capabilities to our API. We’ll provide a way

for clients to hit our

/hobbits and

pass the field they want to use to sort the responses, and our

API will sort the data by that field in ascending order.

Here’s the new code for the

GET /hobbits

endpoint:

server.get('/hobbits', (req, res) => {

// query string parameters get added to req.query

const sortField = req.query.sortby || 'id';

const hobbits = [

{

id: 1,

name: 'Samwise Gamgee',

},

{

id: 2,

name: 'Frodo Baggins',

},

]; // apply the sorting

const response = hobbits.sort(

(a, b) => (a[sortField] < b[sortField] ? -1 : 1)

); res.status(200).json(response);

});

Visit

localhost:8000/hobbits?sortby=name, and the list should be sorted by

name. Visit

localhost:8000/hobbits?sortby=id, and the list should now be sorted by

id. If no

sortby

parameter is provided, it should default to sorting by

id.

To read values from the query string, we use the

req.query

object added by Express. There will be a key and a value in the

req.query

object for each key/value pair found in the query string.

The parameter’s value will be of type

array if more

than one value is passed for the same key and

string when

only one value is passed. For example, in the query string ?id=123,

req.query.id

will be a string, but for ?id=123&id=234, it will be an array.

Another gotcha is that the names of query string parameters are

case sensitive,

sortby and

sortBy are two

different parameters.

The rest of the code sorts the array before sending it back to the client.

Reading Data from the Request Body

We begin by taking another look at the

POST /hobbits

endpoint. We need to read the hobbit's information to add it

to the

hobbits array.

Let's do that next:

// add this code right after const server = express();

server.use(express.json());let hobbits = [

{

id: 1,

name: 'Bilbo Baggins',

age: 111,

},

{

id: 2,

name: 'Frodo Baggins',

age: 33,

},

];

let nextId = 3;// and modify the post endpoint like so:

server.post('/hobbits', (req, res) => {

const hobbit = req.body;

hobbit.id = nextId++; hobbits.push(hobbit); res.status(201).json(hobbits);

});To make this work with the hobbits array, we first move it out of the get endpoint into the outer scope. Now we have access to it from all route handlers.

Then we define a variable for manual id generation. When using a database, this is not necessary as the database management system generates ids automatically.

To read data from the request body, we need to do two things:

-

Add the line:

server.use(express.json());after the express application has been created. - Read the data from the body property that Express adds to the request object. Express takes all the client’s information from the body and makes it available as a nice JavaScript object.

Note that we are skipping data validation to keep this demo simple, but in a production application, you would validate before attempting to save to the database.

Let’s test it using Postman:

- Change the method to POST.

-

Select the

Bodytab underneath the address bar. -

Click on the

rawradio button. -

From the dropdown menu to the right of the

binaryradio button, select `JSON (application/json). - Add the following JSON object as the body:

{

"name": "Samwise Gamgee",

"age": 30

}

Click on Send,

and the API should return the list of hobbits, including Sam!

Follow Along

Please code along as we implement the

PUT endpoint

and a way for the client to specify the sort direction.

Implement Update Functionality

Let’s continue practicing reading route parameters and information from the request body. Let’s take a look at our existing PUT endpoint:

server.put('/hobbits', (req, res) => {

res.status(200).json({ url: '/hobbits', operation: 'PUT' });

});We start by adding support for a route parameter the clients can use to specify the id of the hobbit they intend to update:

server.put('/hobbits/:id', (req, res) => {

res.status(200).json({ url: '/hobbits', operation: 'PUT' });

});

Next, we read the hobbit information from the request body using

req.body and

use it to update the existing hobbit.

server.put('/hobbits/:id', (req, res) => {

const hobbit = hobbits.find(h => h.id == req.params.id); if (!hobbit) {

res.status(404).json({ message: 'Hobbit does not exist' });

} else {

// modify the existing hobbit

Object.assign(hobbit, req.body); res.status(200).json(hobbit);

}

});

Concentrate on the code related to reading the

id from the

req.params

object and reading the hobbit information from

req.body. The

rest of the code will change as this is a simple example using

an in-memory array. Most production APIs will use a database.

Challenge

For the following exercises, use the provided database helper

functions inside

/data/db.js to

work with the database users.

Implement a

GET endpoint

that will accept an

id as a route

parameter and return the user corresponding to that

id. If the user

is not found, return the correct HTTP Status Code.

Implement all CRUD operations on users, including getting by ID.

Add sorting and pagination support to the GET all endpoint. For pagination, the client should supply the API with sorting information, the number of users per page, and the page number.

Overview

REST is a

generally agreed-upon set of principles and constraints. They

are

recommendations, not a standard.

// inside /api/apiRoutes.js <- this can be place anywhere and called anything

const express = require('express');

// if the other routers are not nested inside /api then the paths would change

const userRoutes = require('./users/userRoutes');

const productRoutes = require('./products/productRoutes');

const clientRoutes = require('./clients/clientRoutes');

const router = express.Router(); // notice the Uppercase R

// this file will only be used when the route begins with "/api"

// so we can remove that from the URLs, so "/api/users" becomes simply "/users"

router.use('/users', userRoutes);

router.use('/products', productRoutes);

router.use('/clients', clientRoutes);

// .. and any other endpoint related to the user's resource

// after the route has been fully configured, we then export it so it can be required where needed

module.exports = router; // standard convention dictates that this is the last line on the file

Objective 1 — explain the role of a foreign key

Overview

Foreign keys are a type of table field used for creating links between tables. Like primary keys, they are most often integers that identify (rather than store) data. However, whereas a primary key is used to id rows in a table, foreign keys are used to connect a record in one table to a record in a second table.

Follow Along

Consider the following

farms and

ranchers

tables.

The farm_id in

the

ranchers table

is an example of a

foreign key.

Each entry in the

farm_id

(foreign key) column corresponds to an

id (primary

key) in the

farms table.

This allows us to track which farm each rancher belongs to while

keeping the tables normalized.

If we could only see the

ranchers table,

we would know that John, Jane, and Jen all work together and

that Jim and Jay also work together. However, to know where any

of them work, we would need to look at the

farms table.

Challenge

Open SQLTryIT (Links to an external site.).

How many records in the products table belong to the category “confections”?

Objective 2 — query data from multiple tables

Now that we understand the basics of querying data from a single table, let’s move on to selecting data from multiple tables using JOIN operations.

Overview

We can use a

JOIN to combine

query data from multiple tables using a single

SELECT

statement.

There are different types of joins; some are listed below:

- inner joins.

- outer joins.

- left joins.

- right joins.

- cross joins.

- non-equality joins.

- self joins.

Using

joins requires

that the two tables of interest contain at least one field with

shared information. For example, if a

departments table has

an id field, and an

employee table has a

department_id field,

and the values that exist in the

id column of the

departments table live

in the

department_id field of

the employee table, we can use those fields to join both tables

like so:

select * from employees

join departments on employees.department_id = departments.id

This query will return the data from both tables for every

instance where the

ON condition is

true. If there are employees with no value for departmentid or where the value stored in the field does not correspond

to an existing id in the

departments

table, then that record will NOT be returned. In a similar

fashion, any records from the

departments

table that don't have an employee associated with them

will also be omitted from the results. Basically, if the

id* does not show as the value of department_id for an employee,

it won't be able to join.

We can shorten the condition by giving the table names an alias. This is a common practice. Below is the same example using aliases, picking which fields to return and sorting the results:

select d.id, d.name, e.id, e.first_name, e.last_name, e.salary

from employees as e

join departments as d

on e.department_id = d.id

order by d.name, e.last_nameNotice that we can take advantage of white space and indentation to make queries more readable.

There are several ways of writing joins, but the one shown here should work on all database management systems and avoid some pitfalls, so we recommend it.

The syntax for performing a similar join using Knex is as follows:

db('employees as e')

.join('departments as d', 'e.department_id', 'd.id')

.select('d.id', 'd.name', 'e.first_name', 'e.last_name', 'e.salary')Follow Along

A good explanation of how the different types of joins can be seen in this article from w3resource.com (Links to an external site.).

Challenge

Use this online tool (Links to an external site.) to write the following queries:

- list the products, including their category name.

- list the products, including the supplier name.

- list the products, including both the category name and supplier name.

What is SQL Joins?

An SQL JOIN clause combines rows from two or more tables. It creates a set of rows in a temporary table.

How to Join two tables in SQL?

A JOIN works on two or more tables if they have at least one common field and have a relationship between them.

JOIN keeps the base tables (structure and data) unchanged.

Join vs. Subquery

- JOINs are faster than a subquery and it is very rare that the opposite.

- In JOINs the RDBMS calculates an execution plan, that can predict, what data should be loaded and how much it will take to processed and as a result this process save some times, unlike the subquery there is no pre-process calculation and run all the queries and load all their data to do the processing.

- A JOIN is checked conditions first and then put it into table and displays; where as a subquery take separate temp table internally and checking condition.

- When joins are using, there should be connection between two or more than two tables and each table has a relation with other while subquery means query inside another query, has no need to relation, it works on columns and conditions.

SQL JOINS: EQUI JOIN and NON EQUI JOIN

The are two types of SQL JOINS — EQUI JOIN and NON EQUI JOIN

- SQL EQUI JOIN :

The SQL EQUI JOIN is a simple SQL join uses the equal sign(=) as the comparison operator for the condition. It has two types — SQL Outer join and SQL Inner join.

- SQL NON EQUI JOIN :

The SQL NON EQUI JOIN is a join uses comparison operator other than the equal sign like >, <, >=, <= with the condition.

SQL EQUI JOIN : INNER JOIN and OUTER JOIN

The SQL EQUI JOIN can be classified into two types — INNER JOIN and OUTER JOIN

- SQL INNER JOIN

This type of EQUI JOIN returns all rows from tables where the key record of one table is equal to the key records of another table.

- SQL OUTER JOIN

This type of EQUI JOIN returns all rows from one table and only those rows from the secondary table where the joined condition is satisfying i.e. the columns are equal in both tables.

In order to perform a JOIN query, the required information we need are:

a) The name of the tablesb) Name of the columns of two or more tables, based on which a condition will perform.

Syntax:

FROM table1

join_type table2

[ON (join_condition)]Parameters:

Pictorial Presentation of SQL Joins:

Example:

Sample table: company

Sample table: foods

To join two tables ‘company’ and ‘foods’, the following SQL statement can be used :

SQL Code:

SELECT company.company_id,company.company_name,

foods.item_id,foods.item_name

FROM company,foods;Copy

Output:

COMPAN COMPANY_NAME ITEM_ID ITEM_NAME

------ ------------------------- -------- ---------------

18 Order All 1 Chex Mix

18 Order All 6 Cheez-It

18 Order All 2 BN Biscuit

18 Order All 3 Mighty Munch

18 Order All 4 Pot Rice

18 Order All 5 Jaffa Cakes

18 Order All 7 Salt n Shake

15 Jack Hill Ltd 1 Chex Mix

15 Jack Hill Ltd 6 Cheez-It

15 Jack Hill Ltd 2 BN Biscuit

15 Jack Hill Ltd 3 Mighty Munch

15 Jack Hill Ltd 4 Pot Rice

15 Jack Hill Ltd 5 Jaffa Cakes

15 Jack Hill Ltd 7 Salt n Shake

16 Akas Foods 1 Chex Mix

16 Akas Foods 6 Cheez-It

16 Akas Foods 2 BN Biscuit

16 Akas Foods 3 Mighty Munch

16 Akas Foods 4 Pot Rice

16 Akas Foods 5 Jaffa Cakes

16 Akas Foods 7 Salt n Shake

.........

.........

.........JOINS: Relational Databases

Key points to remember:

Click on the following to get the slides presentation -

Practice SQL Exercises

- SQL Exercises, Practice, Solution

- SQL Retrieve data from tables [33 Exercises]

- SQL Boolean and Relational operators [12 Exercises]

- SQL Wildcard and Special operators [22 Exercises]

- SQL Aggregate Functions [25 Exercises]

- SQL Formatting query output [10 Exercises]

- SQL Quering on Multiple Tables [7 Exercises]

- FILTERING and SORTING on HR Database [38 Exercises]

- SQL JOINS

- SQL JOINS [29 Exercises]

- SQL JOINS on HR Database [27 Exercises]

-

- SQL SUBQUERIES

- SQL SUBQUERIES [39 Exercises]

- SQL SUBQUERIES on HR Database [55 Exercises]

-

- SQL Union[9 Exercises]

- SQL View[16 Exercises]

- SQL User Account Management [16 Exercise]

- Movie Database

- BASIC queries on movie Database [10 Exercises]

- SUBQUERIES on movie Database [16 Exercises]

- JOINS on movie Database [24 Exercises]

-

- Soccer Database

- Introduction

- BASIC queries on soccer Database [29 Exercises]

- SUBQUERIES on soccer Database [33 Exercises]

- JOINS queries on soccer Database [61 Exercises]

-

- Hospital Database

- Introduction

- BASIC, SUBQUERIES, and JOINS [39 Exercises]

-

- Employee Database

- BASIC queries on employee Database [115 Exercises]

- SUBQUERIES on employee Database [77 Exercises]

-

- More to come!

Objective 3 — write database access methods

Overview

While we can write database code directly into our endpoints, best practices dictate that all database logic exists in separate, modular methods. These files containing database access helpers are often called models

Follow Along

To handle CRUD operations for a single resource, we would want to create a model (or database access file) containing the following methods:

function find() {

}function findById(id) {

}function add(user) {

}function update(changes, id) {

}function remove(id) {

}Each of these functions would use Knex logic to perform the necessary database operation.

function find() {

return db('users');

}

For each method, we can choose what value to return. For

example, we may prefer

findById() to

return a single

user object

rather than an array.

function findById(id) {

// first() returns the first entry in the db matching the query

return db('users').where({ id }).first();

}

We can also use existing methods like

findById() to

help

add() return

the new user (instead of just the id).

function add(user) {

db('users').insert(user)

.then(ids => {

return findById(ids[0]);

});

}Once all methods are written as desired, we can export them like so:

module.exports = {

find,

findById,

add,

update,

delete,

}…and use the helpers in our endpoints

const User = require('./user-model.js');router.get('/', (req, res) => {

User.find()

.then(users => {

res.json(users);

})

.catch( err => {});

});

There should no be

knex code in

the endpoints themselves.

A database is a collection of data organized for easy retrieval and manipulation.

We’re concerned only with digital databases, those that run on computers or other electronic devices. Digital databases have been around since the 1960s. Relational databases, those which store “related” data, are the oldest and most common type of database in use today.

Data Persistence

A database is often necessary because our application or code requires data persistence. This term refers to data that is infrequently accessed and not likely to be modified. In less technical terms, the information will be safely stored and remain untouched unless intentionally modified.

A familiar example of non-persistent data would be JavaScript objects and arrays, which reset each time the code runs.

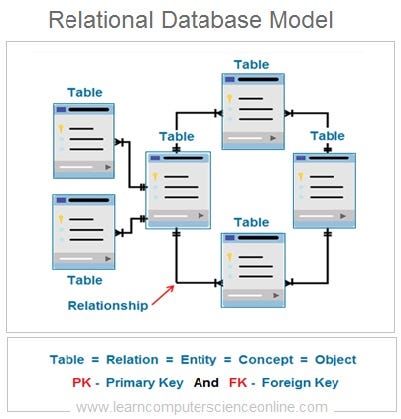

Relational Databases

In relational databases, the data is stored in tabular format grouped into rows and columns (similar to spreadsheets). A collection of rows is called a table. Each row represents a single record in the table and is made up of one or more columns.

These kinds of databases are relational because a relation is a mathematical idea equivalent to a table. So relational databases are databases that store their data in tables.

Tables

Below are some basic facts about tables:

Tables organize data in rows and columns.

Each row in a table represents one distinct record.

Each column represents a field or attribute that is common to all the records.

Fields should have a descriptive name and a data type appropriate for the attribute it represents.

Tables usually have more rows than columns.

Tables have primary keys that uniquely identify each row.

Foreign keys represent the relationships with other tables.

SQL:

SQL is a standard language, which means that it almost certainly will be supported, no matter how your database is managed. That said, be aware that the SQL language can vary depending on database management tools. This lesson focuses on a set of core commands that never change. Learning the standard commands is an excellent introduction since the knowledge transfers between database products.

The syntax for SQL is English-like and requires fewer symbols than programming languages like C, Java, and JavaScript.

It is declarative and concise, which means there is a lot less to learn to use it effectively.

When learning SQL, it is helpful to understand that each command is designed for a different purpose. If we classify the commands by purpose, we’ll end up with the following sub-categories of SQL:

-

Data Definition Language (DDL): used to modify database objects. Some examples are:

CREATE TABLE,ALTER TABLE, andDROP TABLE. -

Data Manipulation Language (DML): used to manipulate the data stored in the database. Some

examples are:

INSERT,UPDATE, andDELETE. -

Data Query Language (DQL): used to ask questions about the data stored in the

database. The most commonly used SQL command is

SELECT, and it falls in this category. -

Data Control Language (DCL): used to manage database security and user’s access to data.

These commands fall into the realm of Database Administrators.

Some examples are

GRANTandREVOKE. -

Transaction Control Commands: used for managing groups of statements that must execute as

a unit or not execute at all. Examples are

COMMITandROLLBACK.

As a developer, you’ll need to get familiar with DDL and become proficient using DML and DQL. This lesson will cover only DML and DQL commands.

The four SQL operations covered in this section will allow a user to query, insert, and modify a database table.

Query

A

query

is a SQL statement used to retrieve data from a database. The

command used to write queries is

SELECT, and it

is one of the most commonly used SQL commands.

The basic syntax for a

SELECT

statement is this:

select <selection> from <table name>;

To see all the fields on a table, we can use a

* as the

selection.

select * from employees;

The preceding statement would show all the records and all the

columns for each record in the

employees

table.

To pick the fields we want to see, we use a comma-separated list:

select first_name, last_name, salary from employees;The return of that statement would hold all records from the listed fields.

We can extend the

SELECT

command's capabilities using

clauses for

things like filtering, sorting, pagination, and more.

It is possible to query multiple tables in a single query. But, in this section, we only perform queries on a single table. We will cover performing queries on multiple tables in another section.

Insert

To

insert

new data into a table, we’ll use the

INSERT command.

The basic syntax for an

INSERT

statement is this:

insert into <table name> (<selection>) values (<values>)Using this formula, we can specify which values will be inserted into which fields like so:

insert into Customers (Country, CustomerName, ContactName, Address, City, PostalCode)

values ('USA', 'WebDev School', 'Austen Allred', '1 WebDev Court', 'Provo', '84601');Modify

Modifying

a database consists of updating and removing records. For these

operations, we’ll use

UPDATE and

DELETE

commands, respectively.

The basic syntax for an

UPDATE

statement is:

update <table name> set <field> = <value> where <condition>;

The basic syntax for a

DELETE

statement is:

delete from <table name> where <condition>;

Filtering results using WHERE clause

When querying a database, the default result will be every entry in the given table. However, often, we are looking for a specific record or a set of records that meets certain criteria.

A WHERE clause

can help in both cases.

Here’s an example where we might only want to find customers living in Berlin.

select City, CustomerName, ContactName

from Customers

where City = 'Berlin'

We can also chain together

WHERE clauses

using OR and

AND to limit

our results further.

The following query includes only records that match both criteria.

select City, CustomerName, ContactName

from Customers

where Country = 'France' and City = 'Paris'And this query includes records that match either criteria.

select City, CustomerName, ContactName

from Customers

where Country = 'France' or City = 'Paris'These operators can be combined and grouped with parentheses to add complex selection logic. They behave similarly to what you’re used to in programming languages.

You can read more about SQLite operators from w3resource (Links to an external site.).

To select a single record, we can use a

WHERE statement

with a uniquely identifying field, like an id:

select * from Customers

where CustomerId=3;

Other comparison operators also work in

WHERE

conditions, such as

>,

<,

<=, and

>=.

select * from employees where salary >= 50000Ordering results using the ORDER BY clause

Query results are shown in the same order the data was inserted.

To control how the data is sorted, we can use the

ORDER BY

clause. Let's see an example.

-- sorts the results first by salary in descending order, then by the last name in ascending order

select * from employees order by salary desc, last_name;

We can pass a list of field names to

order by and

optionally choose

asc or

desc for the

sort direction. The default is

asc, so it

doesn't need to be specified.

Some SQL engines also support using field abbreviations when sorting.

select name, salary, department from employees order by 3, 2 desc;

In this case, the results are sorted by the department in

ascending order first and then by salary in descending order.

The numbers refer to the fields’ position in the

selection portion of

the query, so

1 would be

name,

2 would be

salary, and so on.

Note that the

WHERE clause

should come after the

FROM clause.

The

ORDER BY clause

always goes last.

select * from employees where salary > 50000 order by last_name;Limiting results using the LIMIT clause

When we wish to see only a limited number of records, we can use

a LIMIT clause.

The following returns the first ten records in the products table:

select * from products

limit 10

LIMIT clauses

are often used in conjunction with

ORDER BY. The

following shows us the five cheapest products:

select * from products

order by price desc

limit 5Inserting data using INSERT

An insert statement adds a new record to the database. All

non-null fields must be listed out in the same order as their

values. Some fields, like ids and timestamps, may be

auto-generated and do not need to be included in an

INSERT

statement.

-- we can add fields in any order; the values need to be in the same ordinal position

-- the id will be assigned automatically

insert into Customers (Country, CustomerName, ContactName, Address, City, PostalCode)

values ('USA', 'WebDev School', 'Austen Allred', '1 WebDev Court', 'Provo', '84601');The values in an insert statement must not violate any restrictions and constraints that the database has in place, such as expected datatypes. We will learn more about constraints and schema design in a later section.

Modifying recording using UPDATE

When modifying a record, we identify a single record or a set of

records to update using a

WHERE clause.

Then we can set the new value(s) in place.

update Customers

set City = 'Silicon Valley', Country = 'USA'

where CustomerName = 'WebDev School'

Technically the

WHERE clause is

not required, but leaving it off would result in every record

within the table receiving the update.

Removing records using DELETE

When removing a record or set of records, we need only identify

which record(s) to remove using a

WHERE clause:

delete from Customers

where CustomerName = 'WebDev School`;

Once again, the

WHERE clause is

not required, but leaving it off would remove every record in

the table, so it's essential.

Raw SQL is a critical baseline skill. However, Node developers generally use an Object Relational Mapper (ORM) or query builder to write database commands in a backend codebase. Both ORMs and query builders are JavaScript libraries that allow us to interface with the database using a JavaScript version of the SQL language.

For example, instead of a raw SQL

SELECT:

SELECT * FROM users;We could use a query builder to write the same logic in JavaScript:

db.select('*').from('users');Query builders are lightweight and easy to get off the ground, whereas ORMs use an object-oriented model and provide more heavy lifting within their rigid structure.

We will use a query builder called knex.js (Links to an external site.).

Knex Setup

To use Knex in a repository, we’ll need to add two libraries:

npm install knex sqlite3

knex is our

query builder library, and

sqlite3 allows

us to interface with a

sqlite

database. We'll learn more about

sqlite and

other

database management systems

in the following module. For now, know that you need both

libraries.

Next, we use Knex to set up a config file:

const knex = require('knex');const config = {

client: 'sqlite3',

connection: {

filename: './data/posts.db3',

},

useNullAsDefault: true,

};module.exports = knex(config);

To use the query builder elsewhere in our code, we need to call

knex and pass

in a

config object.

We'll be discussing Knex configuration more in a future

module. Still, we only need the

client,

connection, and

useNullAsDefault

keys as shown above. The

filename should

point towards the pre-existing database file, which can be

recognized by the .db3

extension.

GOTCHA: The file path to the database should be with respect to the root of the repo, not the configuration file itself.

Once Knex is configured, we can import the above config file anywhere in our codebase to access the database.

const db = require('../data/db-config.js);

The db object

provides methods that allow us to begin building queries.

SELECT using Knex

In Knex, the equivalent of

SELECT * FROM users

is:

db.select('*').from('users');There’s a simpler way to write the same command:

db('users');

Using this, we could write a

GET endpoint.

router.get('/api/users', (req, res) => {

db('users')

.then(users => {

res.json(users);

})

.catch (err => {

res.status(500).json({ message: 'Failed to get users' });

});

});NOTE: All Knex queries return promises.

Knex also allows for a where clause. In Knex, we could write

SELECT * FROM users WHERE id=1

as

db('users').where({ id: 1 });

This method will resolve to an array containing a single entry

like so:

[{ id: 1, name: 'bill' }].

Using this, we might add a

GET endpoint

where a specific user:

server.get('api/users/:id', (req, res) => {

const { id } = req.params; db('users').where({ id })

.then(users => {

// we must check the length to find our if our user exists

if (users.length) {

res.json(users);

} else {

res.status(404).json({ message: 'Could not find user with given id.' })

})

.catch (err => {

res.status(500).json({ message: 'Failed to get user' });

});

});INSERT using Knex

In Knex, the equivalent of

INSERT INTO users (name, age) VALUES ('Eva',

32)

is:

db('users').insert({ name: 'Eva', age: 32 });

The insert method in Knex will resolve to an array containing

the newly created id for that user like so:

[3].

UPDATE using Knex

In knex, the equivalent of

UPDATE users SET name='Ava', age=33 WHERE id=3;

is:

db('users').where({ id: 3 })

.update({name: 'Ava', age: 33 });

Note that the

where method

comes before

update, unlike

in SQL.

Update will resolve to a count of rows updated.

DELETE using Knex

In Knex, the equivalent of

DELETE FROM users WHERE age=33;

is:

db('users').where({ age: 33}).del();

Once again, the

where must come

before the del.

This method will resolve to a count of records removed.

Here’s a small project you can practice with.

SQLlite Studio is an application that allows us to create, open, view, and modify SQLite databases. To fully understand what SQLite Studio is and how it works, we must also understand the concept of the Database Management Systems (DBMS).

What is a DBMS?

To manage digital databases we use specialized software called DataBase Management Systems (DBMS). These systems typically run on servers and are managed by DataBase Administrators (DBAs).

In less technical terms, we need a type of software that will allow us to create, access, and generally manage our databases. In the world of relational databases, we specifically use Relational Database Mangement Systems (RDBMs). Some examples are Postgres, SQLite, MySQL, and Oracle.

Choosing a DBMS determines everything from how you set up your database, to where and how the data is stored, to what SQL commands you can use. Most systems share the core of the SQL language that you’ve already learned.

In other words, you can expect

SELECT,

UPDATE,

INSERT,

WHERE , and the

like to be the same across all DBMSs, but the subtleties of the

language may vary.

What is SQLite?

SQLite is the DBMS, as the name suggests, it is a more lightweight system and thus easier to get set up than some others.

SQLite allows us to store databases as single files. SQLite

projects have a .db3

extension. That is the database.

SQLite is not a database (like relational, graph, or document are databases) but rather a database management system.

Opening an existing database in SQLite Studio

One useful visual interface we might use with a SQLite database is called SQLite Studio. Install SQLITE Studio here. (Links to an external site.)

Once installed, we can use SQLite Studio to open any .db3

file from a previous lesson. We may view the tables, view the

data, and even make changes to the database.

For a more detailed look at SQLite Studio, follow along in the video above.

A database schema is the shape of our database. It defines what tables we’ll have, which columns should exist within the tables and any restrictions on each column.

A well-designed database schema keeps the data well organized and can help ensure high-quality data.

Note that while schema design is usually left to Database Administrators (DBAs), understanding schema helps when designing APIs and database logic. And in a smaller team, this step may fall on the developer.

For a look at schema design in SQLite Studio, follow along in the video above.

When designing a single table, we need to ask three things:

- What fields (or columns) are present?

- What type of data do we expect for each field?

- Are there other restrictions needed for each column?

Looking at the following schema diagram for an

accounts table,

we can the answer to each other those questions:

Table Fields

Choosing which fields to include in a table is relatively straight forward. What information needs to be tracked regarding this resource? In the real world, this is determined by the intended use of the product or app.

However, this is one requirement every table should satisfy: a

primary key. A primary key is a way to identify each entry in the database

uniquely. It is most often represented as a auto-incrementing

integer called

id or

[tablename]Id.

Datatypes

Each field must also have a specified datatype. The datatype available depends on our DBMS. Some supported datatype in SQLite include:

- Null: Missing or unknown information.

- Integer: Whole numbers.

- Real: Any number, including decimals.

- Text: Character data.

- Blob: a large binary object that can be used to store miscellaneous data.

Any data inserted into the table must match the datatypes determined in schema design.

Constraints

Beyond datatypes, we may add additional constraints on each field. Some examples include:

- Not Null: The field cannot be left empty

- Unique: No two records can have the same value in this field

- Primary key: — Indicates this field is the primary key. Both the not null and unique constraints will be enforced.

- Default: — Sets a default value if none is provided.

As with data types, any data that does not satisfy the schema constraints will be rejected from the database.

Multi-Table Design

Another critical component of schema design is to understand how different tables relate to each other. This will be covered in later lesson.

Knex provides a schema builder, which allows us to write code to design our database schema. However, beyond thinking about columns and constraints, we must also consider updates.

When a schema needs to be updated, a developer must feel confident that the changes go into effect everywhere. This means schema updates on the developer’s local machine, on any testing or staging versions, on the production database, and then on any other developer’s local machines. This is where migrations come into play.

A

database migration

describes changes made to the structure of a database.

Migrations include things like adding new objects, adding new

tables, and modifying existing objects or tables.

Knex Cli

To use migrations (and to make Knex setup easier), we need to

use

knex cli. Install knex globally with

npm install -g knex.

This allows you to use Knex commands within any repo that has

knex as a local

dependency. If you have any issues with this global install, you

can use the

npx knex

command instead.

Initializing Knex

To start, add the

knex and

sqlite3

libraries to your repository.

npm install knex sqlite3

We’ve seen how to use manually create a config object to get started with Knex, but the best practice is to use the following command:

knex initOr, if Knex isn’t globally installed:

npx knex init

This command will generate a file in your root folder called

knexfile.js. It

will be auto populated with three config objects, based on

different environments. We can delete all except for the

development object.

module.exports = { development: {

client: 'sqlite3',

connection: {

filename: './dev.sqlite3'

}

}};

We’ll need to update the location (or desired location) of the

database as well as add the

useNullAsDefault

option. The latter option prevents crashes when working with

sqlite3.

module.exports = { development: {

// our DBMS driver

client: 'sqlite3',

// the location of our db

connection: {

filename: './data/database_file.db3',

},

// necessary when using sqlite3

useNullAsDefault: true

}};Now, wherever we configure our database, we may use the following syntax instead of hardcoding in a config object.

const knex = require('knex');const config = require('../knexfile.js');// we must select the development object from our knexfile

const db = knex(config.development);// export for use in codebase

module.exports = db;Knex Migrations

Once our

knexfile is set

up, we can begin creating

migrations. Though it's not required, we are going to add an

addition option

to the config object to specify a directory for the migration

files.

development: {

client: 'sqlite3',

connection: {

filename: './data/produce.db3',

},

useNullAsDefault: true,

// generates migration files in a data/migrations/ folder

migrations: {

directory: './data/migrations'

}

}We can generate a new migration with the following command:

knex migrate:make [migration-name]

If we needed to create an accounts table, we might run:

knex migrate:make create-accounts

Note that inside

data/migrations/

a new file has appeared. Migrations have a timestamp in their

filenames automatically. Wither you like this or not,

do not edit migration names.

The migration file should have both an

up and a

down function.

Within the

up function, we

write the ended database changes. Within the

down function,

we write the code to undo the

up functions.

This allows us to undo any changes made to the schema if

necessary.

exports.up = function(knex, Promise) {

// don't forget the return statement

return knex.schema.createTable('accounts', tbl => {

// creates a primary key called id

tbl.increments();

// creates a text field called name which is both required and unique

tbl.text('name', 128).unique().notNullable();

// creates a numeric field called budget which is required

tbl.decimal('budget').notNullable();

});

};exports.down = function(knex, Promise) {

// drops the entire table

return knex.schema.dropTableIfExists('accounts');

};References for these methods are found in the schema builder section of the Knex docs (Links to an external site.).

At this point, the table is not yet created. To run this (and any other) migrations, use the command:

knex migrate:latest

Note if the database does not exist, this command will auto-generate one. We can use SQLite Studio to confirm that the accounts table has been created.

Changes and Rollbacks

If later down the road, we realize you need to update your schema, you shouldn’t edit the migration file. Instead, you will want to create a new migration with the command:

knex migrate:make accounts-schema-update

Once we’ve written our updates into this file we save and close with:

knex migrate:latest

If we migrate our database and then quickly realize something isn’t right, we can edit the migration file. However, first, we need to rolllback (or undo) our last migration with:

knex migrate:rollback

Finally, we are free to rerun that file with

knex migrate

latest.

NOTE: A rollback should not be used to edit an old migration file once that file has accepted into a main branch. However, an entire team may use a rollback to return to a previous version of a database.

Overview

Knex provides a schema builder, which allows us to write code to design our database schema. However, beyond thinking about columns and constraints, we must also consider updates.

When a schema needs to be updated, a developer must feel confident that the changes go into effect everywhere. This means schema updates on the developer’s local machine, on any testing or staging versions, on the production database, and then on any other developer’s local machines. This is where migrations come into play.

A

database migration

describes changes made to the structure of a database.

Migrations include things like adding new objects, adding new

tables, and modifying existing objects or tables.

Knex Cli

To use migrations (and to make Knex setup easier), we need to

use

knex cli. Install knex globally with

npm install -g knex.

This allows you to use Knex commands within any repo that has

knex as a local

dependency. If you have any issues with this global install, you

can use the

npx knex

command instead.

Initializing Knex

To start, add the

knex and

sqlite3

libraries to your repository.

npm install knex sqlite3

We’ve seen how to use manually create a config object to get started with Knex, but the best practice is to use the following command:

knex initOr, if Knex isn’t globally installed:

npx knex init

This command will generate a file in your root folder called

knexfile.js. It

will be auto populated with three config objects, based on

different environments. We can delete all except for the

development object.

module.exports = { development: {

client: 'sqlite3',

connection: {

filename: './dev.sqlite3'

}

}};

We’ll need to update the location (or desired location) of the

database as well as add the

useNullAsDefault

option. The latter option prevents crashes when working with

sqlite3.

module.exports = { development: {

// our DBMS driver

client: 'sqlite3',

// the location of our db

connection: {

filename: './data/database_file.db3',

},

// necessary when using sqlite3

useNullAsDefault: true

}};Now, wherever we configure our database, we may use the following syntax instead of hardcoding in a config object.

const knex = require('knex');const config = require('../knexfile.js');// we must select the development object from our knexfile

const db = knex(config.development);// export for use in codebase

module.exports = db;Knex Migrations

Once our

knexfile is set

up, we can begin creating

migrations. Though it's not required, we are going to add an

addition option

to the config object to specify a directory for the migration

files.

development: {

client: 'sqlite3',

connection: {

filename: './data/produce.db3',

},

useNullAsDefault: true,

// generates migration files in a data/migrations/ folder

migrations: {

directory: './data/migrations'

}

}We can generate a new migration with the following command:

knex migrate:make [migration-name]

If we needed to create an accounts table, we might run:

knex migrate:make create-accounts

Note that inside

data/migrations/

a new file has appeared. Migrations have a timestamp in their

filenames automatically. Wither you like this or not,

do not edit migration names.

The migration file should have both an

up and a

down function.

Within the

up function, we

write the ended database changes. Within the

down function,

we write the code to undo the

up functions.

This allows us to undo any changes made to the schema if

necessary.

exports.up = function(knex, Promise) {

// don't forget the return statement

return knex.schema.createTable('accounts', tbl => {

// creates a primary key called id

tbl.increments();

// creates a text field called name which is both required and unique

tbl.text('name', 128).unique().notNullable();

// creates a numeric field called budget which is required

tbl.decimal('budget').notNullable();

});

};exports.down = function(knex, Promise) {

// drops the entire table

return knex.schema.dropTableIfExists('accounts');

};References for these methods are found in the schema builder section of the Knex docs (Links to an external site.).

At this point, the table is not yet created. To run this (and any other) migrations, use the command:

knex migrate:latest

Note if the database does not exist, this command will auto-generate one. We can use SQLite Studio to confirm that the accounts table has been created.

Changes and Rollbacks

If later down the road, we realize you need to update your schema, you shouldn’t edit the migration file. Instead, you will want to create a new migration with the command:

knex migrate:make accounts-schema-update

Once we’ve written our updates into this file we save and close with:

knex migrate:latest

If we migrate our database and then quickly realize something isn’t right, we can edit the migration file. However, first, we need to rolllback (or undo) our last migration with:

knex migrate:rollback

Finally, we are free to rerun that file with

knex migrate

latest.

NOTE: A rollback should not be used to edit an old migration file once that file has accepted into a main branch. However, an entire team may use a rollback to return to a previous version of a database.

Overview

Often we want to pre-populate our database with sample data for testing. Seeds allow us to add and reset sample data easily.

Follow Along

The Knex command-line tool offers a way to seed our database; in other words, pre-populate our tables.

Similarly to migrations, we want to customize where our seed

files are generated using our

knexfile

development: {

client: 'sqlite3',

connection: {

filename: './data/produce.db3',

},

useNullAsDefault: true,

// generates migration files in a data/migrations/ folder

migrations: {

directory: './data/migrations'

},

seeds: {

directory: './data/seeds'

}

}

To create a seed run:

knex seed:make 001-seedName

Numbering is a good idea because Knex doesn’t attach a timestamp to the name like migrate does. Adding numbers to the file name, we can control the order in which they run.

We want to create seeds for our accounts table:

knex seed:make 001-accounts

A file will appear in the designated seed folder.

exports.seed = function(knex, Promise) {

// we want to remove all data before seeding

// truncate will reset the primary key each time

return knex('accounts').truncate()

.then(function () {

// add data into insert

return knex('accounts').insert([

{ name: 'Stephenson', budget: 10000 },

{ name: 'Gordon & Gale', budget: 40400 },

]);

});

};Run the seed files by typing:

knex seed:run

You can now use SQLite Studio to confirm that the accounts table has two entries.

SQL & PostgreSQL

Foreign keys are a type of table field used for creating links between tables. Like primary keys, they are most often integers that identify (rather than store) data. However, whereas a primary key is used to id rows in a table, foreign keys are used to connect a record in one table to a record in a second table.

Consider the following

farms and

ranchers

tables.

The farm_id in

the

ranchers table

is an example of a

foreign key.

Each entry in the

farm_id

(foreign key) column corresponds to an

id (primary

key) in the

farms table.

This allows us to track which farm each rancher belongs to while

keeping the tables normalized.

If we could only see the

ranchers table,

we would know that John, Jane, and Jen all work together and

that Jim and Jay also work together. However, to know where any

of them work, we would need to look at the

farms table.

Now that we understand the basics of querying data from a single table, let’s move on to selecting data from multiple tables using JOIN operations.

Overview

We can use a

JOIN to combine

query data from multiple tables using a single

SELECT

statement.

There are different types of joins; some are listed below:

- inner joins.

- outer joins.

- left joins.

- right joins.

- cross joins.

- non-equality joins.

- self joins.

Using

joins requires

that the two tables of interest contain at least one field with

shared information. For example, if a

departments table has

an id field, and an

employee table has a

department_id field,

and the values that exist in the

id column of the

departments table live

in the

department_id field of

the employee table, we can use those fields to join both tables

like so:

select * from employees

join departments on employees.department_id = departments.id

This query will return the data from both tables for every

instance where the

ON condition is

true. If there are employees with no value for departmentid or where the value stored in the field does not correspond

to an existing id in the

departments

table, then that record will NOT be returned. In a similar

fashion, any records from the

departments

table that don't have an employee associated with them

will also be omitted from the results. Basically, if the

id* does not show as the value of department_id for an employee,

it won't be able to join.

We can shorten the condition by giving the table names an alias. This is a common practice. Below is the same example using aliases, picking which fields to return and sorting the results:

select d.id, d.name, e.id, e.first_name, e.last_name, e.salary

from employees as e

join departments as d

on e.department_id = d.id

order by d.name, e.last_nameNotice that we can take advantage of white space and indentation to make queries more readable.

There are several ways of writing joins, but the one shown here should work on all database management systems and avoid some pitfalls, so we recommend it.

The syntax for performing a similar join using Knex is as follows:

db('employees as e')

.join('departments as d', 'e.department_id', 'd.id')

.select('d.id', 'd.name', 'e.first_name', 'e.last_name', 'e.salary')Follow Along